Two designers, two approaches

In our experience, there are two basic ways that designers approach the creation of a website:

- The genius method: “I know exactly what to design, so I’ll just get on with it.”

- The empirical method: “I know roughly where to start, so I’ll design, test, and then revise as needed.”

To illustrate this, let’s imagine two designers, Tom and Maria, who are both asked to design a medium-size content website for cycling – say about 300 pages of well-designed, clearly written information.

- Tom is from the school of genius design. He believes he creates great websites because he is a talented designer, and his past work has been praised.

- Maria is from the school of empirical design. She believes she is a good designer, but she knows that she will not get everything right the first time, so she uses a variety of research tools to inform (and correct) her designs as she goes.

Tom and the genius method

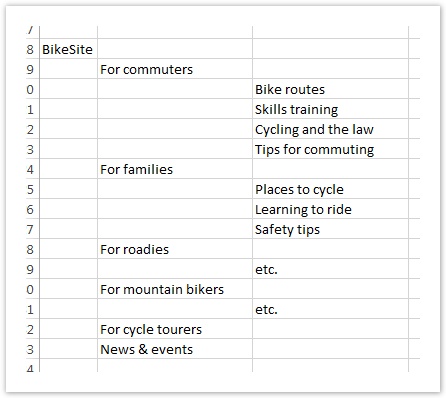

Having studied the content and talked to the site’s internal stakeholders, Tom thinks a lot, then opens a new spreadsheet and creates a text tree of possible headings and subheadings, based on different audiences.

When he reviews his tree ideas with others on the team, most of them like the audience-based scheme. They make some comments and suggestions, and he accordingly makes a few revisions before delivering the design.

Everyone is happy until several weeks after the site is released. The analytics show that certain parts of the site that were expected to get heavy traffic are getting very little, while the web-feedback channel is choked with users complaining they can’t find this or that on the new site. (They love the new look and feel, but it’s much harder to find things now that everything has changed.) This feedback persists for several months.

"Way too much work to find what I want. Maps used to be all in one place, but now they're scattered all over the place." - a disgruntled site visitor

Eventually, a consultant is brought in to do some usability testing on the site and recommend fixes. One of the major issues she finds is that the site is organized in a way that makes sense to the project team, but not to some of their major audiences. Some of this can be fixed with simple terminology changes, but some of it will require fundamental changes to the site structure, which will in turn require a good deal of content rework.

No one (including Tom) wanted this result, but now that they know about it, it can be fixed in release 2 (if they can get funding for it).

"We were hoping to add a stolen-bike registry next, but that will have to wait until we solve some basic navigation issues." - site owner

Maria and the empirical method

Like Tom, Maria starts by studying the content and talking to the site’s internal stakeholders. And, like Tom, her initial instinct is an audience-based scheme.

She asks about any existing user research and receives the results of a site survey done the previous year, which suggests some new content but doesn’t give any clues about better ways to organize the site.

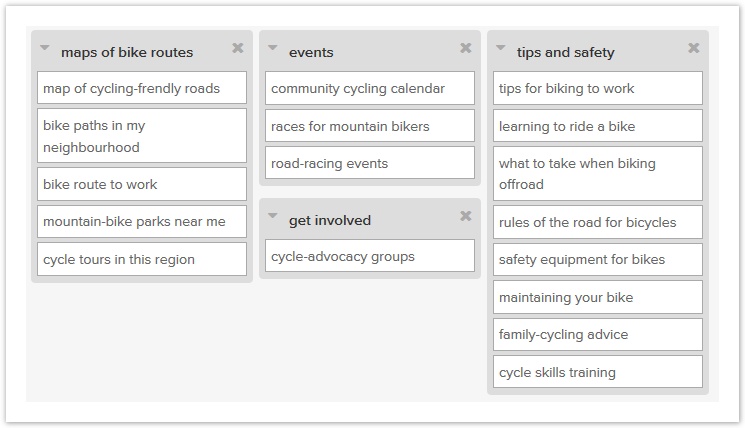

She has some ideas on how to structure the content, but she also knows that she is not the target audience, so she decides that she needs to get some user input to help generate structure ideas. She runs an open card sort online using some representative content, and discovers that most users actually organize the cards according to activity, not according to audience. Her initial hunch was wrong, but it’s easy to change direction this early in the game.

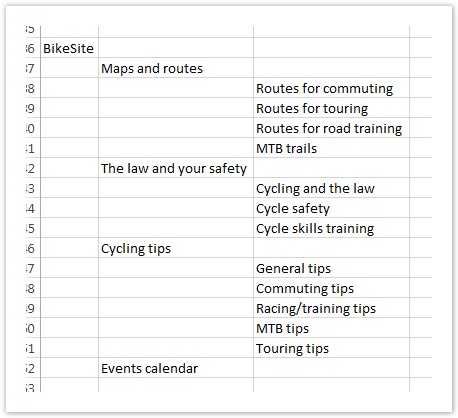

She then opens a new spreadsheet and creates a text tree of possible headings and subheadings, based on the various types of topics that the site offers.

Instead of finalizing the structure then and there, she wants some proof that a topic-based tree is better than her initial idea of an audience-based tree. So she creates an audience-based tree as well, and decides to test them against each other. (The project sponsor happens to prefer the audience scheme, so this is an additional reason to get objective data on both before deciding.)

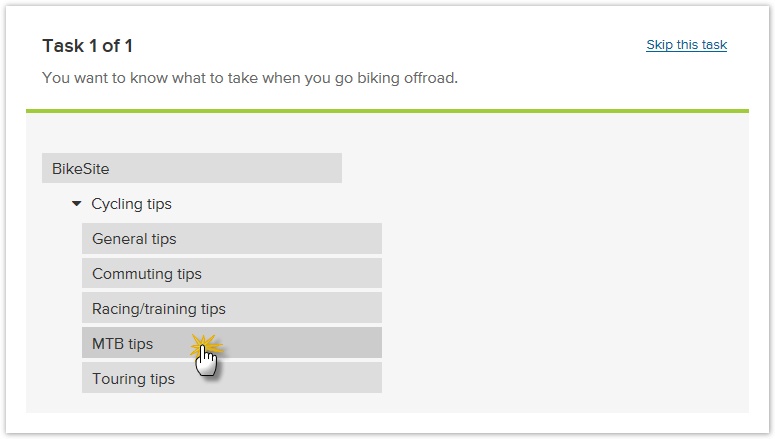

Maria spends a week running side-by-side tree tests (one for each tree) and gets about 100 people to try out each tree.

The results are revealing – the topic-based tree performs much better, except for a few tasks where the audience-based tree wins:

| Metric | Topic tree | Audience tree |
|---|---|---|
| Success rate | 72% | 55% |
| Directness | 77% | 64% |
| Average time | 14 sec | 18 sec |

Maria reviews the results of the test with the team, and they agree to go with the topic tree, incorporating some elements of the audience tree that worked particularly well. This is the structure they build the site with, and when they run a usability test on the alpha version, it performs well, apart from a few minor issues that they can fix before the site is released.

Once the site is live, the analytics show the expected areas of traffic. A few users complain about not being able to find things because they’ve been moved, but these complaints dwindle after the first month as users become familiar with the new structure.

"Took a bit to get used to, but it's now much easier to find what I want." - a satisfied site visitor

Maria, her project team, and upper management are all happy with the new site:

"Great feedback from users, and traffic has jumped too. The committee was happy to give us the go-ahead for the new stuff in release 2." - site owner

The moral of our story

Don’t dismiss this as a straw-man example; over the years we’ve seen an astonishing number of websites created using Tom’s single-design genius method (or something very close to it). The Toms of the design world may be talented, but they are often curiously reluctant to use empirical tools to test their designs before the website ships. And the odds of them getting everything right the first time are very low. That translates into a high risk for the organization.

Geniuses do have great ideas (that’s why they’re geniuses), but they also tend to think that all their ideas are good. Geniuses are also hard to find, sometimes hard to work with, and usually expensive. And they don’t come with guarantees.

On the other hand, designers like Maria who use empirical tools to improve their work lower the risk of delivering mistakes to users. By testing their ideas before the website is finished (or even before it’s started), they can see what works and what doesn’t for the intended audiences. Instead of doing “genius design”, they’re doing “user-centered design”.

We don’t have to be geniuses to recommend the latter approach.


Copyright © 2016 Dave O'Brien

This guide is covered by a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.